October 30th, 2024

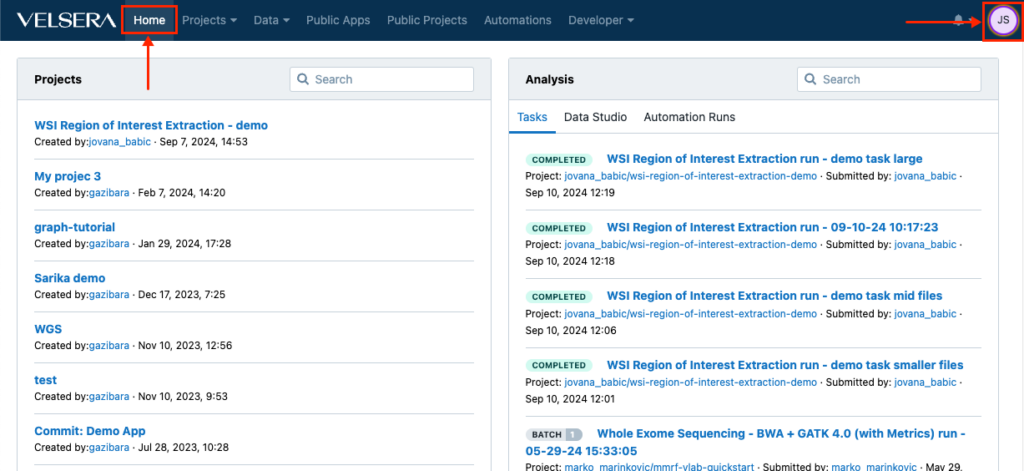

Changes in the main navigation

The main navigation now features a Home button which returns you to the dashboard at any given point. In addition, the icon for accessing the account settings now shows your initials instead of the username.

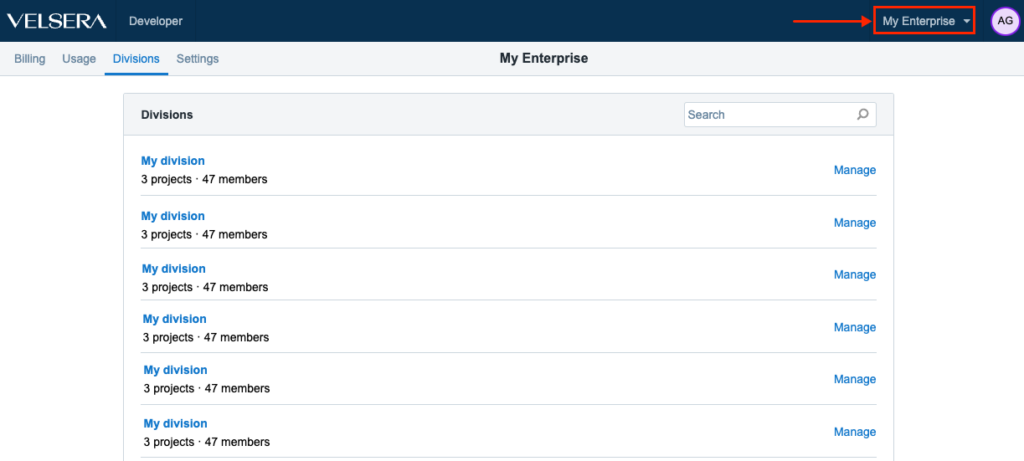

Improved UX for Enterprise users

We have introduced a small change to improve the UX for Enterprise users. The menu for accessing Divisions is now in the upper right-hand corner, right next to the Account Settings.

October 28th, 2024

Nextflow – enhanced execution and scalability

We’re excited to announce powerful new features to enhance Nextflow performance, scalability, and resource optimization. These updates streamline workflow execution and expand support for advanced configurations, making it easier than ever to manage complex pipelines.

Multi-Instance Execution

Support for multi-instance execution allows Nextflow workflows to leverage multiple instances concurrently. This improvement enables increased parallelism and better resource utilization across workflows, resulting in significant performance boosts for complex or large-scale data pipelines.

Memoization (Work Reuse)

Memoization for workflow tasks allows Nextflow to reuse previous results, significantly reducing compute time and resource usage. This feature is especially beneficial when iterating on workflows with minor changes, as previously executed tasks are reused when their input data and parameters remain the same.

Elastic Disk Support

Elastic disk capability allows dynamic scaling of disk resources based on workflow requirements. This flexible storage management ensures that high-demand workflows have access to the necessary storage without manual intervention, optimizing both performance and cost.

Instance Hints for Optimized Resource Selection

Nextflow now supports instance hints to provide tailored recommendations for instance types based on workflow needs. These hints help reduce costs and improve runtime efficiency by selecting the most suitable instance types for different tasks.

Real-Time Job Monitoring for Worker Instances

Enhanced job monitoring capabilities provide real-time insights into the status of worker instances. Users can monitor task progress and troubleshoot issues as they arise, leading to faster adjustments and a more seamless workflow execution experience.

Compatibility with All Nextflow Executor Versions (Post 21.10.0)

Full support for all Nextflow executor versions after 21.10.0 allows users to run workflows with the exact executor version they need, ensuring compatibility and reproducibility across executions. This enhancement allows teams to replicate their work consistently while taking advantage of continuous improvements in the Nextflow ecosystem.

June 10th, 2024

Recently published apps

The somatic small variant callers for long-read data, ClairS (0.2.0) and ClairS-TO (0.1.0), have been published to the Seven Bridges Platform. ClairS is intended for matched tumor-normal pairs and ClairS-TO for tumor-only data.

May 13th, 2024

Recently published apps

We have published the GCTA 1.94.1 tool on the Seven Bridges Platform. GCTA (Genome-wide Complex Trait Analysis) is a suite of tools for various genetic analyses using genome-wide data. It was initially developed to estimate the proportion of phenotypic variance explained by all genome-wide SNPs for a complex trait but has been greatly extended for many other analyses of data from genome-wide association studies (GWASs).

May 10th, 2024

Recently published apps

snM3C pipeline

The snM3C pipeline is designed for profiling 3D genome structure and DNA methylation in single cell data as a part of the Human Cell Atlas and the WARP BRAIN Initiative.

The snM3C pipeline performs:

- Demultiplexing (by the Demultiplexing custom tool)

- Reads sorting (by the Sort custom tool)

- Reads trimming (by Cutadapt)

- Paired-end reads alignment (by Hisat-3n)

- Separating unmapped, uniquely aligned, and multi-aligned reads (by Separate unmapped reads wrapped around a custom script)

- Splitting unmapped reads by enzyme cut site (by Split unmapped reads wrapped around a custom script)

- Alignment of the unmapped, single-end reads (by Hisat-3n)

- Removing the overlapping reads (by Remove overlap read parts wrapped around a custom script)

- Merging mapped reads from single- and paired-end alignments (by Samtools Merge)

- Removing duplicate reads (by Picard MarkDuplicates)

- Calling chromatin contacts (by Call chromatin contacts wrapped around a custom script)

- Creating ALLC files (by Allcools bam-to-allc)

- Creating summary output (by Allcools extract-allc)

All tools are wrapped specifically for this workflow and use a retagged us.gcr.io/broad-gotc-prod/m3c-yap-hisat:1.0.0-2.2.1 Docker image.

DeepSomatic 1.6.1

DeepSomatic is an extension of DeepVariant for calling somatic variants from matched tumor-normal data. The tool is still in active development and only WGS data is currently supported.

SortMeRNA 4.3.6

SortMeRNA is a local sequence alignment tool for filtering, mapping and OTU clustering. The main applications of SortMeRNA are filtering rRNA from metatranscriptomic data, OTU-picking and taxonomy assignation available through QIIME v1.9+.

dupRadar 1.32.0

The dupRadar tool is intended for duplication rate quality control of RNA-Seq data. It gives insight into the duplication problem by graphically relating the expression level of each gene to its duplication rate.

April 8th, 2024

Recently published apps

Here are the new apps published in our Public Apps gallery:

- ASCAT 3.1.2 tools (ASCAT prepareTargetedSeq, ASCAT prepareHTS and ASCAT). ASCAT prepareTargetedSeq prepares SNP references for ASCAT processing of targeted sequencing data. ASCAT prepareHTS prepares sequencing data (WGS, WES or targeted) for ASCAT. ASCAT infers tumor ploidy, purity and allele-specific copy number profiles.

- JAFFAL 2.3 tool. JAFFAL is used to detect fusion genes from long-read (PacBio and ONT) transcriptome sequencing with high accuracy, overcoming the challenges posed by higher error rates in long-read data.

- Ballgown 2.34.0 toolkit. Ballgown is a package designed to facilitate flexible differential expression analysis of RNA-Seq data. It also provides functions to organize, visualize, and analyze the expression measurements for transcriptome assembly.

Apps with version updates

- StringTie 2.2.1 toolkit. StringTie is a fast and highly efficient assembler of RNA-Seq alignments into potential transcripts. StringTie Merge tool merges/assembles GTF/GFF transcript files into a non-redundant set of transcripts. This tool should be used after StringTie transcript assembling of each sample in the experiment.

April 1st, 2024

Recently published apps

We published the following ASCAT 3.1.2 tools in our Public Apps gallery:

- ASCAT prepareTargetedSeq prepares SNP references for ASCAT processing of targeted sequencing data.

- ASCAT prepareHTS prepares sequencing data (WGS, WES or targeted) for ASCAT. ASCAT infers tumor ploidy, purity and allele-specific copy number profiles.

Recently updated apps

We updated the following apps from the MSIsensor v0.6 toolkit:

- MSIsensor scan – a tool for cataloging homopolymer and microsatellite sites in the reference genome. It prepares the reference for MSIsensor msi.

- MSIsensor msi – a tool for detecting and scoring somatic microsatellite changes. Designed to work with paired tumor-normal data.

March 11th, 2024

Recently published apps

We’ve published the following new apps on the Seven Bridges Platform:

- FusionInspector (v2.8.0), a tool that performs validation of fusion transcript predictions. FusionInspector is a part of the Trinity Cancer Transcriptome Analysis Toolkit (CTAT). It takes a list of potential fusion genes (obtained by executing any fusion transcript prediction tool), extracts the genomic regions corresponding to the fusion partners, and creates mini-fusion-contigs that hold the gene pairs in the suggested fused orientation. The original reads are then aligned to these putative fusion contigs. In the fusion-gene context, fusion-supporting reads that would typically align as split reads or discordant pairs should align as concordant ‘normal’ reads. Reads that span fragments and reads containing fusion breakpoints that support each fusion are recognized, reported, and scored accordingly.

- Arriba (v2.4.0), a tool for the detection of gene fusions from RNA-Seq data. Arriba is designed to work with STAR aligner-processed data, and the post-alignment runtime is typically a few minutes long. Arriba does not require reducing the --alignIntronMax parameter of STAR to identify fusions resulting from focal deletions, in contrast to many other fusion detection methods that are based on STAR. Its intended application was in the context of clinical research. As such, high sensitivity and fast runtimes were crucial design requirements. Arriba can identify structural rearrangements other than gene fusions that may have clinical significance. These include viral integration sites, internal tandem duplications, whole exon duplications, and truncations of genes (i.e., breakpoints in introns and intergenic regions).

- Arriba draw_fusions.R is an R script that comes with the Arriba gene fusions detection tool. This script produces visualizations of the transcripts involved in predicted fusions that are suitable for publication in terms of quality. For every predicted fusion, it creates a single page in the output PDF file. Each page contains information about the fusion partners, their orientation, the retained exons in the fusion transcript, statistics about the number of supporting reads, and, if the fusion_transcript column has a value, an excerpt of the sequence around the breakpoint.

- Parabricks RNA Pipeline, utilizing Parabricks toolkit v4.2.0. It is used for SNP and Indel discovery from RNAseq input data.

- STAARpipeline PheWAS v0.9.6 which is used for analyzing WGS/WES sequencing data in PheWAS.

- SRA to DRS converter workflow, which converts Sequence Read Archive (SRA) metadata into Data Repository Service (DRS) URIs for streamlined genomic data handling. It addresses the challenge researchers face in efficiently accessing and using large genomic datasets stored in SRA format. By simplifying and automating this conversion, the app facilitates quicker and more effective genomic data analysis, accelerating research in fields such as disease study and genetic discovery.

Recently updated apps

We also updated the following apps:

- DESeq2 tool (v1.40.1), which performs differential gene expression analysis across two or more study conditions using negative binomial generalized linear models. It analyzes estimated read counts from several samples, each belonging to one of the conditions under study, searching for systematic changes between conditions, as compared to within-condition variability.

February 14th, 2024

AlphaFold Visualizer – new public project on the Seven Bridges Platform

We published the AlphaFold Visualizer (v0.1.5) as a public project on the Seven Bridges Platform containing an interactive analysis for the visualization of AlphaFold results. AlphaFold is an AI tool for 3D protein structure prediction. As it produces protein structure models as its output, a secondary analysis is needed for the interpretation and visualization of results. This analysis must be interactive, so it has been developed as the Data Studio AlphaFold Visualizer analysis and it represents a great addition to the publicly available app.

Recently published apps

We have also published new workflows for processing Nanopore data:

- ONT Flowcell Processing – aligns (Minimap2), sorts (Samtools) and quality checks (NanoPlot, Samtools Flagstat, Mosdepth, GATK ComputeLongReadMetrics) input Nanopore data from a single flowcell.

- ONT WGS Variant Calling – merges (Sambamba), calls variants (Clair3, Sniffles2) and quality checks (Mosdepth, NanoPlot) input BAM files from Nanopore data.